GPU Clusters at Scale: Powering the Next Generation of High Performance Computing

GPU Clusters at Scale have become the backbone of modern high performance computing environments. As data volumes explode and artificial intelligence models grow larger than ever before, traditional computing infrastructures struggle to keep up. Enterprises, research institutions, and cloud providers are now turning to distributed GPU clusters designed for extreme scalability, faster data processing, and the ability to train highly complex neural networks. This transformation is reshaping industries and opening the door to innovations that were previously impossible due to computational constraints.

Organizations are no longer asking if they should adopt GPU clusters but how quickly and efficiently they can scale them. From edge computing to hyperscale cloud environments, the demand for high throughput workloads has placed GPUs at the center of technological advancement.

The Evolution of GPU Computing

Graphics Processing Units were originally created to handle rendering tasks for gaming and visual applications. Over time, their highly parallel architecture proved ideal for scientific computing, data analytics, and especially deep learning. CPUs devote a small number of powerful cores to fast sequential execution, while GPUs run thousands of lightweight threads concurrently, which significantly accelerates compute heavy workloads that can be parallelized.

This shift accelerated when NVIDIA introduced CUDA, enabling developers to program GPUs for general purpose computation with greater control. Today, GPUs are foundational in training large language models, climate simulations, genomics analysis, and autonomous driving technology. What started as a niche acceleration tool has evolved into a critical infrastructure component powering the most advanced computational workloads in the world.

Why Scaling Matters in GPU Clusters

The sheer growth of machine learning models and simulation workloads means that a single GPU or standalone server is no longer enough. Scaling GPU clusters enables organizations to combine hundreds or even thousands of GPU devices into a single distributed computing system. When the workload parallelizes well and communication overhead is kept low, throughput can grow close to linearly with the number of GPUs.

Scaling delivers several core benefits:

Faster Training and Inference

AI models like GPT or diffusion based generative models contain billions of parameters. Training them on limited hardware could take months or longer. Scaled GPU clusters can reduce the time to days or, for smaller models, hours, enabling rapid experimentation and breakthrough discoveries.
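As a rough illustration of why cluster size matters, the sketch below estimates training time from a compute budget. It uses the commonly cited rule of thumb that training a dense transformer costs about 6 floating point operations per parameter per training token. The model size, token count, peak throughput, and utilization are illustrative assumptions rather than measurements, and the estimate assumes perfect linear scaling, which real clusters only approximate.

```python
def training_days(params, tokens, num_gpus, peak_flops_per_gpu, utilization):
    """Estimate wall-clock training days from a FLOP budget."""
    total_flops = 6 * params * tokens  # rule-of-thumb training cost
    sustained = num_gpus * peak_flops_per_gpu * utilization  # cluster FLOP/s
    return total_flops / sustained / 86_400  # seconds -> days

# Hypothetical workload: 7e9 parameters trained on 1e12 tokens.
# Hypothetical hardware: 3e14 FLOP/s peak per GPU at 40% utilization.
for n in (8, 64, 512):
    days = training_days(7e9, 1e12, n, 3e14, 0.4)
    print(f"{n:>4} GPUs: {days:.1f} days")
```

Under these assumptions the estimate falls from roughly 506 days on 8 GPUs to about 8 days on 512, which is the kind of reduction that makes repeated experimentation practical.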

Enhanced Data Parallelism

Splitting each batch of data across multiple GPUs lets every device process its own shard in parallel, so larger datasets can be processed in less time, provided the input pipeline and interconnect keep pace.
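The idea behind data parallel training can be sketched in plain Python. In this minimal example, which assumes a toy one parameter model y = w * x and a batch that divides evenly among workers, each worker computes a gradient on its own shard and the results are averaged. The averaging step stands in for the all-reduce that a real framework would perform across GPUs; the function names are hypothetical.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the model y = w * x."""
    n = len(xs)
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n

def data_parallel_grad(w, xs, ys, num_workers):
    """Shard the batch, compute per-worker gradients, then average them."""
    shard = len(xs) // num_workers  # assumes the batch divides evenly
    local = [
        grad_mse(w, xs[i * shard:(i + 1) * shard], ys[i * shard:(i + 1) * shard])
        for i in range(num_workers)
    ]
    return sum(local) / num_workers  # stands in for an all-reduce average

xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]
full = grad_mse(0.5, xs, ys)
sharded = data_parallel_grad(0.5, xs, ys, num_workers=4)
print(full, sharded)  # the two gradients agree
```

Because the shards are equal in size, the averaged gradient matches the full batch gradient, so each worker can apply the same update and the model replicas stay in sync.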

Higher Utilization of Resources

Clusters allow dynamic resource sharing. If a project requires additional GPUs temporarily, resources can be allocated on demand and then reassigned when the workload is completed.
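A toy allocator shows the basic bookkeeping behind this kind of sharing. The `GpuPool` class and the job names are hypothetical, and a production scheduler would also handle queuing, priorities, and placement across nodes.

```python
class GpuPool:
    """Toy allocator: hands out GPU ids to jobs and reclaims them on release."""

    def __init__(self, num_gpus):
        self.free = set(range(num_gpus))
        self.held = {}  # job name -> set of GPU ids

    def allocate(self, job, count):
        if count > len(self.free):
            return None  # not enough free GPUs; a real scheduler would queue the job
        grant = {self.free.pop() for _ in range(count)}
        self.held.setdefault(job, set()).update(grant)
        return grant

    def release(self, job):
        # Return every GPU held by the job to the free set.
        self.free |= self.held.pop(job, set())

pool = GpuPool(8)
pool.allocate("training", 6)          # training takes 6 of 8 GPUs
print(pool.allocate("inference", 4))  # None: only 2 GPUs are free
pool.release("training")              # training finishes
print(len(pool.allocate("inference", 4)))  # 4: the GPUs were reassigned
```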

Support for Multiple Concurrent Workloads

Enterprises frequently run batch jobs, inference tasks, and training pipelines simultaneously. A properly scaled cluster helps keep performance stable across these tasks so that one workload does not starve another.

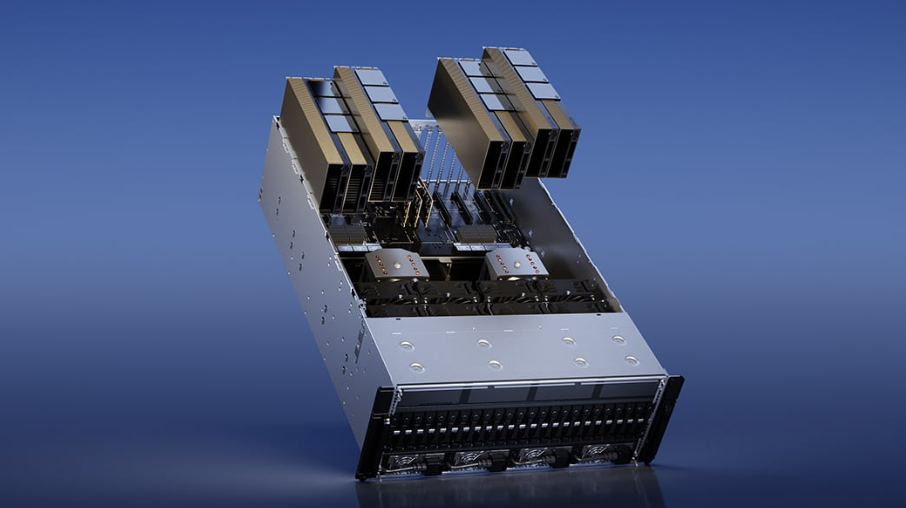

Key Components of GPU Clusters at Scale

Scaling clusters requires more than simply adding more GPUs. To function efficiently, the architecture must be optimized across several interconnected components. These include:

High Performance GPUs

Modern clusters rely on GPUs designed for compute acceleration rather than graphics. NVIDIA’s A100 and H100 and AMD’s Instinct MI300 series are examples of data center accelerators designed for massive neural computation throughput.

Multi Layer Interconnects

Communication speed between GPUs often determines how well a cluster scales. NVIDIA NVLink provides high bandwidth links between GPUs, typically within a node, while InfiniBand provides low latency networking between nodes, so data can be shared quickly during distributed training.
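To see why link speed matters, consider the cost of synchronizing gradients with a ring all-reduce, a common collective pattern in distributed training. The sketch below uses the standard bandwidth and latency model for a ring, in which each GPU sends and receives 2 × (N − 1) chunks of size S / N. The message size, link speeds, and hop latency are illustrative assumptions, not vendor specifications.

```python
def ring_allreduce_seconds(num_gpus, message_bytes, link_bytes_per_s, hop_latency_s=0.0):
    """Bandwidth plus latency estimate for a ring all-reduce."""
    steps = 2 * (num_gpus - 1)  # reduce-scatter phase, then all-gather phase
    per_step_bytes = message_bytes / num_gpus  # each step moves one chunk
    return steps * (per_step_bytes / link_bytes_per_s + hop_latency_s)

# Hypothetical gradient buffer: 7e9 parameters at 2 bytes each.
grad_bytes = 7e9 * 2
for label, bandwidth in (("fast link", 300e9), ("slow link", 25e9)):
    t = ring_allreduce_seconds(8, grad_bytes, bandwidth, hop_latency_s=5e-6)
    print(f"{label}: {t:.3f} s per synchronization")
```

Since this synchronization happens on every training step, a slower link translates directly into GPUs waiting on the network instead of computing.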

CPU Host Nodes

Even though GPUs perform the intense computation, CPUs play an essential role in orchestrating task distribution, data input, and overall cluster management.

Scalable Storage Systems

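With petabytes of data needed for modern AI workloads, scalable storage must support extremely fast data access speeds. Parallel file systems such as Lustre are commonly used, often together with technologies such as NVIDIA GPUDirect Storage, which moves data directly between storage and GPU memory without staging it in CPU memory.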

Cluster Orchestration and Software Tools

Schedulers and orchestration tools like Kubernetes, Slurm, and Ray automate, schedule, and balance workloads. Frameworks such as TensorFlow and PyTorch support distributed GPU training, typically relying on communication libraries such as NVIDIA NCCL for collective operations between GPUs.

Together, these components allow organizations to scale clusters while keeping GPUs well utilized and operational delays to a minimum.

Challenges in Scaling GPU Clusters

While the benefits are enormous, scaling GPU clusters presents several challenges that organizations must consider.

Cost and Infrastructure Complexity

High performance GPUs are costly, often leading to large capital expenditure. Running and maintaining an interconnected GPU cluster requires sophisticated cooling systems, energy supplies, and operational oversight.

Efficient Resource Management

Without proper orchestration, some GPUs may remain idle while others are overloaded. Resource inefficiency directly impacts ROI, productivity, and performance.
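One simple way to reduce this imbalance is a greedy heuristic that places the longest remaining job on the least loaded GPU. The sketch below is a minimal illustration with hypothetical job names and durations. It is a heuristic rather than an optimal scheduler, and real orchestrators also weigh memory, topology, and priorities.

```python
import heapq

def assign_jobs(job_hours, num_gpus):
    """Greedy balancing: place the longest remaining job on the least-loaded GPU."""
    heap = [(0.0, gpu) for gpu in range(num_gpus)]  # (accumulated hours, gpu id)
    heapq.heapify(heap)
    placement = {gpu: [] for gpu in range(num_gpus)}
    for name, hours in sorted(job_hours.items(), key=lambda kv: -kv[1]):
        load, gpu = heapq.heappop(heap)  # least-loaded GPU so far
        placement[gpu].append(name)
        heapq.heappush(heap, (load + hours, gpu))
    loads = {gpu: load for load, gpu in heap}
    return placement, loads

# Hypothetical jobs with estimated run times in hours.
jobs = {"a": 8, "b": 7, "c": 6, "d": 5, "e": 4}
placement, loads = assign_jobs(jobs, num_gpus=2)
print(placement, loads)
```

With these inputs the busier GPU ends up with 17 hours of work and the other with 13, close to the ideal split of 15 each and far better than queuing every job on one device.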

Networking Bottlenecks

As clusters scale horizontally across multiple racks or data centers, data transfer latency and limited bandwidth become major obstacles to maximizing distributed processing potential. Networking hardware must scale alongside compute power.

Performance Optimization Complexity

Software frameworks need to be carefully tuned to ensure each GPU contributes equally to the workload. Gradient synchronization, memory management, and load balancing require advanced optimization strategies.

Overcoming these challenges requires smart cluster design backed by scalable automation tools and infrastructure planning.

Cloud Based GPU Clusters at Scale

Many organizations prefer not to manage physical infrastructure. Cloud providers offer ready to deploy GPU instances that enable instant scalability without upfront investment. These clusters are elastic, allowing businesses to scale up or down depending on workload demands.
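Elastic scaling usually comes down to a sizing rule. The sketch below shows one hypothetical rule, which picks enough GPUs to drain the queued work within a target time window and clamps the result to a configured minimum and maximum. The function name, parameters, and thresholds are assumptions for illustration and do not correspond to any cloud provider's API.

```python
import math

def desired_gpus(queued_gpu_hours, target_hours, min_gpus, max_gpus):
    """Pick a GPU count that would drain the queue within the target window."""
    needed = math.ceil(queued_gpu_hours / target_hours)
    return max(min_gpus, min(max_gpus, needed))  # clamp to the allowed range

print(desired_gpus(100, 4, 2, 64))   # 25: scale up to clear 100 GPU-hours in 4 hours
print(desired_gpus(0, 4, 2, 64))     # 2: idle queue, fall back to the minimum
print(desired_gpus(1000, 4, 2, 64))  # 64: demand exceeds the cap
```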

Major cloud platforms offering scalable GPU solutions include:

- Amazon Web Services

- Google Cloud Platform

- Microsoft Azure

- Oracle Cloud Infrastructure

Cloud infrastructure supports training experiments, real time inference, and scientific simulations that require immediate access to large compute capacity. Startups and research teams particularly benefit from this pay as you go structure.

The flexibility of cloud deployment helps accelerate innovation by removing barriers to high performance computing resources.

Industry Use Cases Transforming the World

GPU clusters are driving breakthroughs across sectors.

Artificial Intelligence and Machine Learning

Training multimodal AI, foundation models, and reinforcement learning systems relies heavily on distributed GPU acceleration.

Genomic Research

Processing sequences and running models for personalized medicine can take a very long time on CPU only systems. GPU acceleration can shorten these pipelines substantially, dramatically improving discovery speed.

FinTech and Quantitative Analysis

High frequency trading models and risk analysis simulations demand real time processing power that scaled GPU clusters deliver.

Manufacturing and Engineering Simulation

Digital twins, CFD simulations, and robotics training use parallel processing to analyze thousands of mechanical variables simultaneously.

Autonomous Transportation

Cluster accelerated training enables perception algorithms to improve rapidly and deploy safely in real world environments.

Every year, as GPU clusters scale further, industries discover new ways to solve huge computational problems.

The Future of GPU Clusters at Scale

Technology leaders are already preparing for the next stage of cluster evolution. Future advancements will focus on:

Higher Density and Energy Efficiency

Next generation GPUs aim to deliver more AI throughput per watt, even as total power draw per device tends to rise. Data centers will need to become more energy smart and densely packed.

Hybrid Deployment Models

Workloads that require low latency or privacy will run on edge GPU clusters while high volume training workloads run in the cloud. Hybrid models allow the best of both worlds.

AI Driven Cluster Management

Automation tools powered by machine learning will monitor workloads, predict usage patterns, and optimize performance autonomously.

Quantum and GPU Integration

As quantum computing evolves, GPU clusters may integrate with quantum processors to solve highly complex optimization and cryptography problems.

These advancements will enable greater performance, lower operational overhead, and even more innovative breakthroughs.

Conclusion

GPU Clusters at Scale represent one of the most important advancements in computational infrastructure. They empower organizations to push the boundaries of artificial intelligence, simulation science, and real time data analytics. As workloads continue to expand, the demand for scalability and optimized performance will only grow. Cloud providers, chip manufacturers, and enterprises are continuously innovating to build faster, smarter, and more sustainable clusters capable of powering the future of technology.

Investing in scalable GPU infrastructure today positions businesses for competitive advantage tomorrow. The world is moving quickly toward automation and intelligent systems. With GPU clusters powering next generation workloads, the future has endless possibilities waiting to be unlocked.